The answer is Terraform. With Terraform, we describe the desired state of the resources in an infrastructure, using modules and providers. To manage resources in Open Telekom Cloud (OTC), there is the Terraform provider Opentelekomcloud. A resource definition, for example for a VPC, looks like this:

```hcl
resource "opentelekomcloud_vpc_v1" "vpc" {
  name   = var.environment
  cidr   = var.vpc_cidr
  shared = true
}
```
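The VPC resource only works once the provider itself is declared. A minimal sketch, assuming the provider is installed from the Terraform Registry as `opentelekomcloud/opentelekomcloud`, that credentials are supplied through the usual `OS_*` environment variables, and that the `eu-de` IAM endpoint is the right one for your region:

```hcl
terraform {
  required_providers {
    # Registry address of the Open Telekom Cloud provider
    opentelekomcloud = {
      source = "opentelekomcloud/opentelekomcloud"
    }
  }
}

# Assumption: user name, password, domain and project are not hard-coded here
# but read from OS_* environment variables (e.g. OS_USERNAME, OS_PASSWORD).
provider "opentelekomcloud" {
  # Assumed IAM endpoint for the eu-de region
  auth_url = "https://iam.eu-de.otc.t-systems.com/v3"
}
```

With this in place, `terraform init` downloads the provider and `terraform plan` shows the VPC that would be created.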

There are also resources for Subnet, EIP, ECS, EVS, etc., and resources can be combined with each other. A Subnet, for example, needs a VPC ID:

```hcl
resource "opentelekomcloud_vpc_subnet_v1" "subnet" {
  name          = var.environment
  # Reference to the VPC above; Terraform derives the creation order from it
  vpc_id        = opentelekomcloud_vpc_v1.vpc.id
  cidr          = var.subnet_cidr
  gateway_ip    = var.subnet_gateway_ip
  primary_dns   = var.subnet_primary_dns
  secondary_dns = var.subnet_secondary_dns
}
```
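Both resources read their settings from input variables. A possible set of declarations is sketched below; all default values are hypothetical examples, and the DNS addresses are assumed to be the OTC name servers for `eu-de`, so verify them for your region:

```hcl
# Name used for the VPC and the subnet (hypothetical default)
variable "environment" {
  type    = string
  default = "demo"
}

# Address range of the whole VPC
variable "vpc_cidr" {
  type    = string
  default = "10.1.0.0/16"
}

# The subnet must lie inside the VPC range
variable "subnet_cidr" {
  type    = string
  default = "10.1.0.0/24"
}

# Gateway address, must lie inside the subnet range
variable "subnet_gateway_ip" {
  type    = string
  default = "10.1.0.1"
}

# Assumed OTC name servers for eu-de
variable "subnet_primary_dns" {
  type    = string
  default = "100.125.4.25"
}

variable "subnet_secondary_dns" {
  type    = string
  default = "100.125.129.199"
}
```

Defaults can be overridden per environment, e.g. `terraform apply -var 'environment=prod'`.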

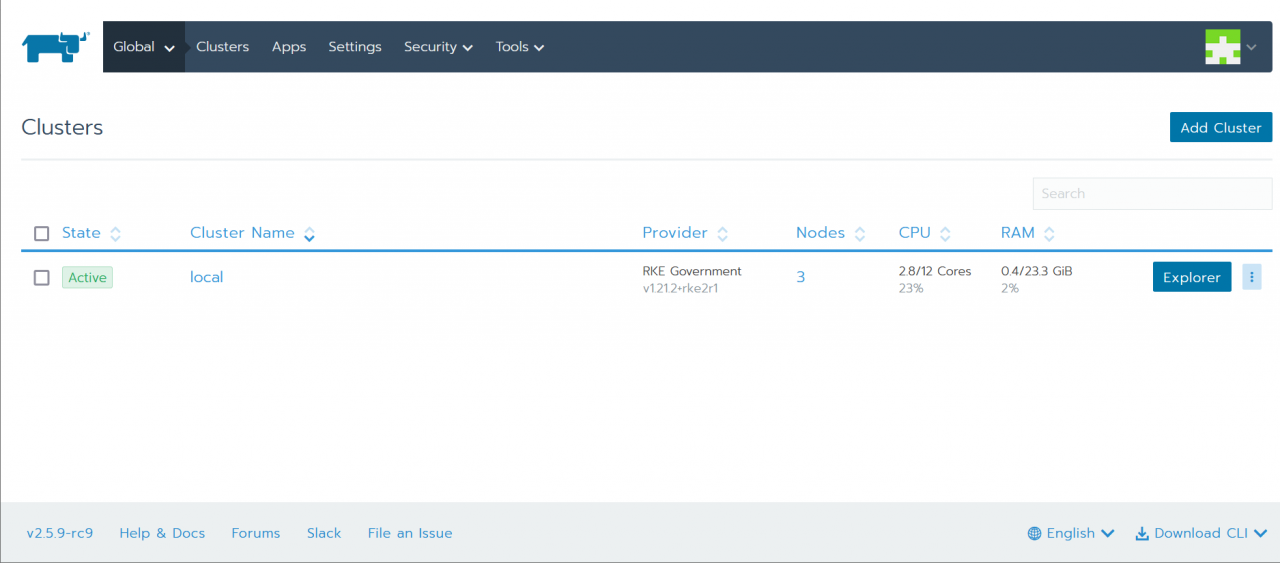

At the end, there is a foundation for a Kubernetes cluster with Rancher. The installation logic is provided via cloud-init: a simple shell script is injected into the VM and executed locally.
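How the shell script reaches the VM can be sketched with the `user_data` argument of an ECS instance (`opentelekomcloud_compute_instance_v2`), which cloud-init runs on first boot. This is a minimal illustration, not the code of the repositories below: it attaches the VM to the subnet defined above, installs a single-node K3S with the official installer script, and uses the hypothetical variables `image_name`, `flavor_name` and `key_pair`:

```hcl
# Hypothetical inputs: a Linux image, a flavor and an existing SSH key pair
variable "image_name" {
  type = string
}

variable "flavor_name" {
  type = string
}

variable "key_pair" {
  type = string
}

resource "opentelekomcloud_compute_instance_v2" "server" {
  name        = "${var.environment}-server"
  image_name  = var.image_name
  flavor_name = var.flavor_name
  key_pair    = var.key_pair

  # Attach the VM to the subnet defined above
  network {
    uuid = opentelekomcloud_vpc_subnet_v1.subnet.id
  }

  # cloud-init executes this shell script once, locally on the VM, at first boot
  user_data = <<-EOT
    #!/bin/sh
    curl -sfL https://get.k3s.io | sh -
  EOT
}
```

Because the script runs inside the VM, Terraform itself needs no SSH access to the node.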

K3S: https://github.com/eumel8/tf-k3s-otc. This repository contains the Terraform resource definitions to install a K3S cluster in Open Telekom Cloud.
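With more than one node, K3S agents join a server through the installer's `K3S_URL` and `K3S_TOKEN` environment variables. A hedged sketch of how the two cloud-init scripts could be generated in Terraform, assuming the `hashicorp/random` provider for the shared token and a hypothetical variable `k3s_server_ip` holding the server's private address; the repository may solve this differently:

```hcl
# Hypothetical: private IP of the first (server) node
variable "k3s_server_ip" {
  type = string
}

# Shared secret that server and agents must agree on
resource "random_password" "k3s_token" {
  length  = 32
  special = false
}

locals {
  # Server: install K3S with a predefined cluster token
  k3s_server_user_data = <<-EOT
    #!/bin/sh
    curl -sfL https://get.k3s.io | K3S_TOKEN=${random_password.k3s_token.result} sh -
  EOT

  # Agent: the installer runs in agent mode when K3S_URL is set
  k3s_agent_user_data = <<-EOT
    #!/bin/sh
    curl -sfL https://get.k3s.io | K3S_URL=https://${var.k3s_server_ip}:6443 K3S_TOKEN=${random_password.k3s_token.result} sh -
  EOT
}
```

Each local would then be passed as `user_data` to the corresponding ECS instance. Generating the token in Terraform avoids reading it back from the server after installation.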

advantages:

disadvantages:

RKE2: https://github.com/eumel8/tf-rke2-otc. This repository describes a Terraform deployment in OTC that creates a Kubernetes cluster based on RKE2 for Rancher.
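RKE2 reads its settings from `/etc/rancher/rke2/config.yaml` when the service starts. A sketch of a cloud-init script for the first server node, assuming the official installer at `get.rke2.io` and two hypothetical variables: `rke2_token` for the cluster secret and `public_ip` (for example the EIP) that is added to the API server certificate via `tls-san`. This illustrates the approach and is not the repository's script:

```hcl
# Hypothetical inputs
variable "rke2_token" {
  type      = string
  sensitive = true
}

variable "public_ip" {
  type = string
}

locals {
  rke2_server_user_data = <<-EOT
    #!/bin/sh
    # Write the RKE2 configuration before the service starts
    mkdir -p /etc/rancher/rke2
    cat > /etc/rancher/rke2/config.yaml <<EOF
    token: ${var.rke2_token}
    tls-san:
      - ${var.public_ip}
    EOF
    # Install the rke2 binary and start it as a server
    curl -sfL https://get.rke2.io | sh -
    systemctl enable --now rke2-server.service
  EOT
}
```

Writing the configuration file before the installation means the very first start of `rke2-server` already uses the intended token and certificate names.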

advantages:

disadvantages:

The advantage of both methods is the “one-binary” concept: little preparation is needed at the operating system level. Just like the installation, the removal is handled by an uninstall script shipped as part of the package. Overall, both are uncomplicated to handle. A possible disadvantage is vendor lock-in: both projects are open source, but development is led by Rancher, which is now part of SUSE. The new product name is SUSE Rancher.